Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

You probably remember the first time a smart assistant actually helped you finish a project – I do. In early 2026 I used Gemini 3 inside Google Docs to draft a multimedia lesson plan, and it felt less like a tool and more like a teammate. This is the promise of next-gen AI Tools. In this post, you’ll learn what Gemini 3 in 2026 is, how it works, and the new pricing plans. We will explore how these AI Tools and API access are reshaping education, creativity, and business workflows.

In 2026, Gemini 3 is the defining AI release for mainstream users because it shows up where you already work: the Gemini app, Google Search (including AI Mode), and enterprise tools like AI Studio, Vertex AI, and Gemini Enterprise. Instead of feeling like a separate chatbot, Google Gemini is becoming a daily layer across education, creativity, and business workflows.

I felt this shift the first time I used Gemini 3 inside Google Docs to prep a lesson plan: I pasted a rough outline, added a photo of a worksheet, and it helped me rewrite instructions, generate examples, and check reading level. I’ll admit a small hiccup: switching modes was confusing at first (I kept wondering why results changed). In 2026, that’s often because Gemini Flash is the default model in the Gemini app and Search AI Mode, optimized for fast inference and quick “good enough” drafts.

What makes Gemini 3 stand out is multimodal AI: you can work across text, images, and even text-to-video workflows (with tools like Veo 3) without jumping between platforms. For developers, the Gemini API (an application programming interface) brings the same capabilities into products, with tiers such as Gemini Pro and Gemini 3 Flash alongside earlier models like Gemini 2.5 Pro and community tooling such as opencode gemini.

Sundar Pichai: “Gemini 3 demonstrates Google’s commitment to safe, helpful AI that works across text, images, and video.”

Gemini (often written as Google Gemini) is Google and DeepMind’s family of multimodal AI foundation models and services. In 2026, you can think of Gemini as the “engine” behind many AI features across Google products, built to understand and generate more than just text.

The Gemini brand covers multiple model versions and tiers. Gemini 3 is one major 2026 release within that family, while Gemini 2.5 Pro was a widely used performance benchmark before Gemini 3 arrived.

Unlike older chatbots that mainly handle text, Gemini is designed to work natively with several kinds of input and output: text, images, audio, code, and video.

Gemini connects tightly with Google tools such as Gmail, Docs, and Sheets, which makes it more useful in practice for students, educators, and teams.

Common tiers include Gemini Pro, Gemini Flash (and Gemini 3 Flash), plus enterprise options.

Demis Hassabis: “Multimodal foundation models like Gemini aim to combine scientific-grade reasoning with practical tools for everyday users.”

Gemini 3 is Google Gemini’s 2026 flagship multimodal model, built to help you solve real tasks with deeper reasoning and faster output. In Google’s 2026 release notes and blog posts, Gemini 3 is positioned as a major step forward for learning, creativity, and business workflows, especially when you need one model that can handle text, images, audio, code, and video in one place.

Jeff Dean: “Gemini 3 brings frontier intelligence closer to real-world applications — faster and more reliably than before.”

Unlike older assistants that mainly focus on chat, Gemini 3 is natively multimodal across Google surfaces. You can use it in the Gemini app, Search AI Mode, and via the Gemini API (an application programming interface) in AI Studio and Vertex AI.

In 2026 testing, Gemini 3 reportedly outperforms Gemini 2.5 Pro on multiple benchmarks, including visual reasoning, scientific knowledge, and coding. You’ll also see tiers like Gemini Flash (and Gemini 3 Flash) for speed, plus “Deep Think” for advanced reasoning in higher plans (including AI Ultra).

| Item | Details |
|---|---|
| Release year | 2026 |
| Deployed across | Gemini app, Search, API, AI Studio, Vertex AI, Gemini Enterprise |
| Benchmark gains | Visual reasoning, scientific knowledge, coding (qualitative improvements) |

In 2026, Gemini 3 stands out because you can work in one flow across text, images, code, audio, and video. This Multimodal AI setup helps you study, teach, and build faster—whether you’re summarizing a lecture recording, debugging code, or explaining a diagram.

Sundar Pichai: “The combination of speed and multimodality in Gemini 3 enables new workflows across education and enterprise.”

Gemini Vids is the text-to-video model capability that turns prompts into short clips, useful for class explainers, product demos, and social content. You can iterate quickly by adjusting style, length, and key scenes—without switching tools.

Gemini Flash (including Gemini 3 Flash) is positioned as the fast default model in many product surfaces, including Search AI Mode. For deeper work, you move up tiers to Gemini Pro and, on AI Ultra, Deep Think.

Gemini 3 adds Visual Layout and Dynamic View so you can get interactive widgets (tables, checklists, planners) inside apps. As Demis Hassabis notes:

Demis Hassabis: “Gemini’s generative UI elements open up fresh ways of interacting with AI-powered content.”

Deep integration with Google Workspace (Gmail automation, Docs drafting, Sheets analysis), plus Camera search, Google AI Lab, AI Studio, and Vertex AI, makes Gemini API workflows more practical. The 2026 release also comes with improved safety, lower sycophancy, and better prompt-injection resistance.

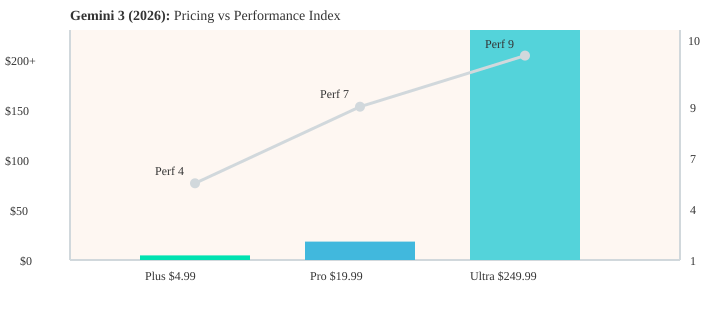

In 2026, Google Gemini keeps pricing simple with three public tiers. Your choice depends on how often you use Gemini 3, whether you need higher limits for the Gemini API (an application programming interface), and how serious your multimodal work is (images, audio, and text-to-video model workflows like Veo 3).

| Plan | Price (2026) | Best for |
|---|---|---|
| Google AI Plus | $4.99 / month | Students & casual use |
| Google AI Pro | $19.99 / month | Power users, devs, small teams |
| Google AI Ultra | $249.99 / month | Research & enterprise-grade needs |

Look for a Gemini Pro student offer or education licensing via Gemini Enterprise (pricing varies). Because Ultra is costly, you should trial Plus or Pro first.
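To see what each tier adds up to over a year, the arithmetic can be sketched as follows. This is a minimal sketch that uses only the monthly prices from the table above. It assumes twelve equal monthly payments with no annual discount, tax, or regional pricing, and the names `MONTHLY_PRICE_CENTS`, `annual_cost_cents`, and `format_usd` are made up for illustration.

```python
# Monthly list prices from the pricing table, stored in cents to avoid float rounding.
MONTHLY_PRICE_CENTS = {
    "Google AI Plus": 499,
    "Google AI Pro": 1999,
    "Google AI Ultra": 24999,
}


def annual_cost_cents(plan: str, months: int = 12) -> int:
    """Return the cost of `plan` over `months`, assuming no discounts or taxes."""
    return MONTHLY_PRICE_CENTS[plan] * months


def format_usd(cents: int) -> str:
    """Format an integer number of cents as a dollar string."""
    return f"${cents // 100:,}.{cents % 100:02d}"


if __name__ == "__main__":
    for plan in MONTHLY_PRICE_CENTS:
        print(f"{plan}: {format_usd(annual_cost_cents(plan))} per year")
```

On these list prices, a year of Ultra comes to $2,999.88 against $239.88 for Pro and $59.88 for Plus, which is the gap to weigh before upgrading.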

Sundar Pichai: “We designed tiered plans so students and creators can access Gemini’s power affordably while enterprises can scale with Ultra.”

Jeff Dean: “AI Ultra unlocks experimental Deep Think for advanced research and safety testing.”

In 2026, you can build on Google Gemini through the Gemini API, an application programming interface that gives you multimodal endpoints for text, code, image, audio, and video. This makes Gemini 3 practical for real apps, not just demos.
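To make "multimodal" concrete, here is a rough sketch of how a text-plus-image request body might be assembled before it is sent. The field names (`contents`, `parts`, `inline_data`, `mime_type`, `data`) follow my understanding of the public `generateContent` JSON shape, so treat them as assumptions and confirm them in the official docs. The helper name `build_request_body` is hypothetical, and the sketch only builds the payload; it does not call any endpoint.

```python
import base64
import json
from typing import Optional


def build_request_body(prompt: str,
                       image_bytes: Optional[bytes] = None,
                       mime_type: str = "image/png") -> dict:
    """Build a JSON-serializable body with a text part and an optional image part."""
    parts = [{"text": prompt}]
    if image_bytes is not None:
        # Binary data is base64-encoded so it can travel inside JSON.
        parts.append({
            "inline_data": {
                "mime_type": mime_type,
                "data": base64.b64encode(image_bytes).decode("ascii"),
            }
        })
    return {"contents": [{"parts": parts}]}


if __name__ == "__main__":
    body = build_request_body("Explain this worksheet.", b"fake-image-bytes")
    print(json.dumps(body, indent=2))
```

Keeping payload construction separate from the network call makes it easy to unit-test prompts and attachments without spending quota.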

For enterprise teams, Vertex AI and AI Studio are the main paths to customize, evaluate, and deploy Gemini 3 with governance. You can prototype prompts in AI Studio, then ship to production on Vertex AI with monitoring and access controls.

Jeff Dean: “APIs and Vertex integration make it possible for developers to deploy Gemini models at scale across products.”

Your pricing tier affects rate limits: Gemini Pro increases API throughput, while Ultra is prioritized for heavy workloads and higher concurrency in 2026. Start in Plus or Pro, then scale when you see success.
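Whatever tier you are on, a client will occasionally hit its rate limit, and a common way to cope is to retry with exponential backoff. The sketch below is a generic pattern, not something taken from Google's documentation: `RateLimitError` is a hypothetical stand-in for whatever quota error your client library raises, and `call_with_backoff` is an illustrative helper name.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical stand-in for the quota error raised by your API client."""


def call_with_backoff(fn, max_attempts=5, base_delay=1.0, sleep=time.sleep):
    """Call fn(), retrying on RateLimitError with exponential backoff plus jitter."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # Out of attempts: let the caller handle the error.
            # Delay doubles each attempt; jitter spreads out simultaneous retries.
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)
```

Wrap the actual request in a zero-argument function (for example a `lambda`) and pass it as `fn`. The `sleep` parameter is injectable so the retry logic can be tested without waiting.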

Use opencode gemini community SDKs and the official docs: ai.google.dev/gemini-api/docs/gemini-3 (check release notes for changes).

You can build a student study app that calls the API for lecture summarization, then generates short explainer clips with Gemini Vids, orchestrated via Gemini Agent.
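The shape of such a study app can be sketched as a two-step pipeline. This is only an outline under stated assumptions: `summarize` and `make_clip` are hypothetical callables that you would implement yourself on top of the API and the video tool, and `StudyPack` and `build_study_pack` are illustrative names, not part of any Google SDK.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class StudyPack:
    summary: str   # Text summary of the lecture
    clip_ref: str  # Reference (e.g. an ID or URL) to the generated explainer clip


def build_study_pack(transcript: str,
                     summarize: Callable[[str], str],
                     make_clip: Callable[[str], str]) -> StudyPack:
    """Summarize a lecture transcript, then request a clip based on the summary."""
    if not transcript.strip():
        raise ValueError("transcript is empty")
    summary = summarize(transcript)  # Step 1: lecture summarization
    clip_ref = make_clip(summary)    # Step 2: explainer clip from the summary
    return StudyPack(summary=summary, clip_ref=clip_ref)


if __name__ == "__main__":
    # Stub callables stand in for real model calls.
    pack = build_study_pack(
        "Today we covered photosynthesis in detail.",
        summarize=lambda text: text[:20],
        make_clip=lambda summary: f"clip-for:{summary}",
    )
    print(pack)
```

Passing the model calls in as functions keeps the orchestration testable with stubs and lets you swap models or tiers without touching the pipeline.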

In 2026, Gemini 3 is built for AI for education because it blends multimodal reasoning with tight Google Workspace integration. You can move from notes to slides, from images to explanations, and from prompts to polished outputs—without switching tools.

If you qualify for the Gemini Pro student offer in 2026, start on Plus or Gemini Pro for longer context and better reasoning; use Gemini Flash or Gemini 3 Flash for quick drafts.

“When used thoughtfully, multimodal AI can amplify teaching without replacing the teacher.” — Annie Murphy Paul

You can use Google Gemini for research briefs, code prototyping via the Gemini API, and data insights in Sheets. In Gmail, it can draft client emails that match your tone and spell out next steps.

For schools, Gemini Enterprise and Workspace admin controls help manage data access and FERPA-like compliance. Example: a professor used Gemini Vids to create a 3-minute recap video and cut prep time by half (illustrative).

In 2026, searches like perplexity vs gemini and perplexity vs chatgpt vs gemini usually come down to one question: do you need multimodal creation, workflow chat, or search-first answers?

| Area | Gemini 3 | ChatGPT | Perplexity |
|---|---|---|---|
| Visual reasoning | 9 | 6 | 5 |
| Coding capability | 8 | 8 | 5 |
| Multimodal handling | 9 | 6 | 5 |
| Speed (low latency) | Gemini Flash: 9 | Varies by tier | Fast for search |

Match Google’s pricing (Plus/Pro/Ultra) against OpenAI pricing and Perplexity plans, then check limits for the Gemini API, Vertex AI, and enterprise controls. If you need fast responses, Gemini Flash or Gemini 3 Flash is built for low latency; if you need model depth, compare Gemini 2.5 Pro access and quotas.

Sundar Pichai: “We see different platforms playing complementary roles; Gemini aims for deep multimodal coverage tied to Google services.”

Sam Altman: “Competition across models pushes better safety and performance for all users.”

In 2026, Google Gemini isn’t one model; it’s a lineup you pick from based on speed, cost, and reasoning depth. As Jeff Dean puts it:

“Choosing the right model is about matching latency, cost, and reasoning depth to the task at hand.”

Google surfaces Gemini Flash by default for speed. For higher performance, you request tiers in Vertex AI or the Gemini API:

model="gemini-flash" | "gemini-pro" | "gemini-3-deep-think"

Developer note: model choice drives pricing and latency. You may see experimental names like Antigravity Framework and Nano Banana in SEO chatter, but ship on stable tiers.
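One way to keep that choice explicit in code is a small selection helper. The sketch below is illustrative only: the three strings are copied from the example line above and are not verified API model IDs, and `pick_model` with its parameters is a hypothetical function written for this post.

```python
# Identifiers copied from the example line in this post; not verified API model IDs.
FAST = "gemini-flash"
BALANCED = "gemini-pro"
DEEP = "gemini-3-deep-think"


def pick_model(needs_deep_reasoning: bool,
               latency_sensitive: bool,
               has_deep_think_access: bool = False) -> str:
    """Pick a model tier by trading off latency against reasoning depth."""
    # The deepest tier only makes sense if it is unlocked and latency can slip.
    if needs_deep_reasoning and has_deep_think_access and not latency_sensitive:
        return DEEP
    # Deeper reasoning without Deep Think access (or with tight latency) uses Pro.
    if needs_deep_reasoning:
        return BALANCED
    # Everything else defaults to the fast tier.
    return FAST


if __name__ == "__main__":
    print(pick_model(needs_deep_reasoning=False, latency_sensitive=True))
    print(pick_model(needs_deep_reasoning=True, latency_sensitive=True))
    print(pick_model(True, False, has_deep_think_access=True))
```

Centralizing the decision in one function means a later change of tier names or defaults touches a single place.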

In 2026, Google Gemini and Gemini 3 rollouts put safety and transparency first, especially as the Gemini API (application programming interface) powers education, business, and text-to-video model workflows like Veo 3. Google’s approach uses layered safety tests, reduced “sycophancy” (agreeing too much), and stronger resistance to prompt-injection attacks—key for real-world success.

Sundar Pichai: “Safety is a core priority as we expand Gemini’s capabilities across products.”

Deep Think is gated in 2026 for controlled testing and high-trust research, typically tied to Google AI Ultra. This is responsible access control: you get advanced reasoning, but with tighter review and safer deployment paths.

Google publishes ongoing release notes documenting model changes and safety updates: https://gemini.google/release-notes/. Check them before you ship updates to a Gemini API integration.

No model is perfect in 2026; human review remains essential for accuracy and compliance.

Annie Murphy Paul: “The right AI tool complements teaching; expensive upgrades aren’t always necessary.”

My take: I kept Pro for daily work and only used Ultra for big research experiments. Upgrade when you outgrow Flash’s limits or need Deep Think’s deeper reasoning and higher usage via the Gemini API.

In 2026, whether Gemini 3 is worth it depends on how often you use multimodal workflows (docs + images + video) and how tightly you work inside Google Workspace. Google Gemini is more mature this year, with smoother integrations, faster tiers like Gemini Flash, and broader Gemini API access for real projects.

Jeff Dean: “Evaluate AI tools by the value they add to workflows — not by hype alone.”

If you’re scaling across many users or apps, consider API access through the Gemini API and Vertex AI instead of paying for Ultra per seat.

Start with Plus or trial Pro, look for a Gemini Pro student offer, and only upgrade after you confirm success in your real workflow. If you only need basic chat, Pro/Ultra may be overkill. Try the lowest-cost path, test the API sandbox, and read release notes before upgrading.

In 2026, Gemini 3 stands out because you can use one Google AI system across learning, creative work, and business—often right inside Google Workspace. You get strong multimodal skills (text, images, and more), fast responses with Gemini Flash (including Gemini 3 Flash), and clear tiered pricing that matches your needs. If you’re comparing perplexity vs gemini or perplexity vs chatgpt vs gemini, the big difference is how tightly Google Gemini connects to Google tools and how flexible the Gemini API is for real apps.

Sundar Pichai: “We’re excited to see how users will leverage Gemini 3 to create new value in education and business.”

My practical advice for 2026: start small. Test Google AI Plus ($4.99) or trial Gemini Pro ($19.99) before you commit to Google AI Ultra ($249.99). I kept Gemini Pro for daily writing, lesson planning, and analysis, but I reserve Ultra for special research runs—like heavier multimodal experiments or long-context work.

Next steps: sign up and run one workflow end-to-end (study notes, a slide outline, or a prototype using the API). If you’re a developer, open the docs and ship a small feature; if you’re an institution, request an Enterprise trial and review safety controls before deploying at scale. Always check release notes, since models like Gemini 2.5 Pro, Veo 3, and any text-to-video model updates can change behavior.

Resources for 2026: AI Studio, Vertex AI, Gemini API docs, and release notes. Try, measure, iterate—and share your Gemini 3 success or questions in the comments.

TL;DR: Gemini 3 (2026) is Google’s leading multimodal AI: fast (Gemini Flash), capable (text, image, audio, video), available via API and apps, with tiers from $4.99 to $249.99 — ideal for students, creators, and enterprises that need advanced reasoning and tight Google Workspace integration.