Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

You might have first noticed Meta AI in a chat bubble on Instagram or a suggestion on WhatsApp. In 2026, Meta AI is no longer an experiment; it is one of the most integrated AI tools, woven into apps, glasses, and ad tools. This post walks you through Meta AI in 2026: what it does, how it works, and the new pricing tiers. We will explore why these AI tools matter for creators and everyday users, moving beyond simple chat to hands-free utility on Ray-Ban Stories and WhatsApp.

If you’re asking what Meta AI is in 2026, think of a daily helper for chat, quick search, and hands-free help on Meta AI glasses. On your commute, the glasses can read a message, summarize a long conversation, and translate a sign; then the Meta AI assistant drafts a reply in WhatsApp.

If you sell or create, 2026 is about goal-only campaigns. Meta plans to fully automate advertising by the end of 2026, using Advantage+ and Meta Lattice to pick audiences, creatives, and budgets. You focus on the product story; Meta AI handles the knobs.

Watch Meta AI news for how Meta labels AI-generated media with a watermark, and for how your data and biography-style personalization are handled.

Dr. Lina Garcia, Meta Research Lead: “Meta AI’s multimodal focus is designed to make everyday tools feel anticipatory, not intrusive.”

| Metric | 2026 Data Point |
|---|---|
| Advantage+ Shopping | 22% higher ROAS |
| DINOv3 | 1.7B images; up to 7B parameters |
| Meta Quest 3 | 2x GPU; 30% more memory vs Quest 2 |

If you follow AI news, you’ve seen how fast Meta AI has moved. In 2026, it’s no longer a lab demo. It’s productized across chat, search, and wearables, so you meet it where you already spend time: WhatsApp, Instagram, and the Meta AI app (plus the web experience).

Here’s a quick real-life moment: you finish a hallway meeting, tap your Meta AI glasses, and ask for a summary and next steps. The glasses capture audio, the Meta AI assistant turns it into action items, and you send them as a message, hands-free. That’s the shift in 2026: AI as a daily assistant across social and wearable contexts.

Ethan Moore, Product Manager at Meta: “In 2026 you won’t just ‘use’ AI — it’ll be part of how your apps and glasses understand the world around you.”

Meta’s edge is multimodal learning across text, image, video, and audio, powered by research like SAM 3 and DINOv3. Meta AI is also now a service with clear tiers: Free ($0) and Subscription ($10/month).

What is Meta AI in 2026? It’s Meta’s suite of AI models, products, and services that power conversation, vision, audio, and personalization across apps you already use. Think of Meta AI as an “AI layer” built into WhatsApp, Instagram, the Meta AI app, and Meta AI glasses, so you can get help without learning machine learning.

Meta combines Llama family models (language models for chat, writing, and reasoning) with vision and media models like DINOv3 (image understanding) and SAM 3 (segmentation for image and video workflows). It also uses SeamlessM4T v2 to improve speech and text translation.

Multimodal learning means Meta AI can work with text, image, video, and audio together, helping you go from a prompt to a caption, edit, or translation in one flow.

Dr. Priya Natarajan, AI Systems Researcher: “Meta AI’s strength is not one model—it’s the orchestration of many specialized models into useful features.”

In 2026, the Meta AI assistant blends chat, contextual search, and proactive suggestions so you can get things done inside the apps you already use. Instead of jumping between tabs, you ask in plain language and it responds with steps, links, and drafts, using natural language processing and reasoning for multi-step tasks.

For example, you can ask it to plan an IG Reel shoot next weekend (shot list, timing, props, and reminders). You can start on WhatsApp, continue in the Meta AI app or on the web, and finish hands-free on Meta glasses. SeamlessM4T v2 improves multilingual conversation and translation consistency.

Conversation history and ephemeral memory personalize replies, with controls to limit what’s stored. AI-generated media can carry watermark and provenance tags for safer sharing.

Alex Chen, UX Lead, Meta Assistant: “The goal is for the assistant to feel like a helpful teammate—drafting, suggesting, and protecting your privacy.”

In 2026, Meta AI in WhatsApp works inside your conversations and accepts text, voice, and images. You can ask the assistant to translate messages, summarize long threads, and transcribe voice notes so you can scan updates fast.

On Instagram, you get AI-assisted captioning, Reel edit suggestions, and context-aware sticker and music picks that match your clip. Creators can also generate video assets from images or restyle existing videos, for example by auto-reframing for Stories vs. Reels.

Maya Santiago, Head of Creator Partnerships: “Creators see faster ideation — AI suggests formats and assets tailored to platform specs.”

The Meta AI app and web dashboard centralize prompts, drafts, and analytics, with an ad creation assistant and an AI sandbox for testing brand-consistent variations. For advertisers, Advantage+ automation supports Advantage+ Shopping, which Meta reports drives about 22% higher ROAS. Meta Lattice, trained on trillions of ad signals, powers smarter discovery, and Meta plans real-time personalization by location and device, aiming for full ad automation by the end of 2026. See updates on www.meta and meta.com/blog.

With your permission, cross-platform context sharing carries relevant conversation details between apps so Meta AI chat stays consistent, with subscription users getting priority features.

In 2026, Meta AI is moving off your phone and onto your face. Meta AI glasses (including newer Ray-Ban Stories variants) give you hands-free voice activation, real-time summaries, and on-device transcription for quick notes and captions, which is useful on a noisy street or mid-conversation.

Dr. Samuel Osei, Head of Wearables Research: “Wearables are where immediacy meets helpfulness — users expect quick, context-aware answers without digging out a phone.”

Meta balances cloud power with local processing for privacy-sensitive tasks, plus a physical shutter for trust. Battery life and all-day comfort still matter, so features may scale based on what you’re doing.

With Meta Quest 3 (2x GPU power and 30% more memory vs Quest 2), AR overlays and AI hooks feel smoother. Developers can tap SDKs (AR overlay APIs + AI stack) to build experiences like:

You’re walking; the glasses whisper a reminder and suggest a short IG Reel based on the scene.

At CES 2026, Meta also teased display teleprompters and EMG handwriting input, signs that Meta’s AI wearables are expanding fast.

In 2026, Meta AI pricing is simple: a free tier for everyday use and a paid tier for power users in the Meta AI app. As Nora Patel, VP of Product Monetization, says:

“A simple two-tier model lets everyday users try AI for free while offering creators predictable premium features.”

The Subscription tier ($10/month) also unlocks premium wearable features for Meta AI glasses in 2026, like continuous transcription and longer memory windows during a conversation (availability varies by region).

You can often try subscription features for 7–14 days inside the app; check regional availability and your Meta account settings.

| Tier | Price | Best for | Key limits/perks |
|---|---|---|---|
| Free | $0 | Casual users | Lower limits, standard speed, watermarking |
| Subscription | $10/mo | Creators & wearables users | Faster, higher limits, premium glasses features |

Business note: ad automation via Advantage+ and Meta Lattice integrations may use separate billing models.

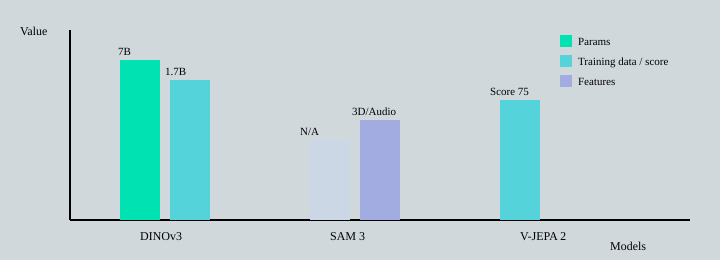

In 2026, Meta AI research is what makes the assistant, chat, and video tools feel “smart” across WhatsApp, Instagram, the Meta AI app, and even Meta AI glasses. Across Meta’s own updates and broader AI news, three projects stand out.

SAM 3 extends universal segmentation to SAM 3D and SAM Audio, so you can isolate objects in images, shaky clips, and even sound sources. One creator told us SAM 3 made background removal on a shaky video effortless, with no frame-by-frame masking.

DINOv3 is a self-supervised vision model trained on 1.7B images, with up to 7B parameters. It boosts zero-shot recognition, which is useful for on-device understanding in Meta glasses and faster tagging on Instagram.

Dr. Helena Ruiz, Computer Vision Lead: “DINOv3 and SAM 3 together close the gap between static image understanding and dynamic, real-world video tasks.”

| Model | Data footprint | Params | Core capability |
|---|---|---|---|
| SAM 3 | N/A | N/A | Universal segmentation for video, plus 3D and audio |
| DINOv3 | 1.7B images | Up to 7B | Zero-shot visual understanding |
| V-JEPA 2 | N/A | N/A | Video tracking memory and consistency |

These advances also power generative creator tools like AI Sandbox and image-to-video experiments, so the answer to “what is Meta AI” becomes practical, not just hype.

In 2026, Meta AI is reshaping ads with Meta Lattice, a machine learning system trained on trillions of ad signals. For you, that means smarter audience discovery and more conversion-focused delivery across the Meta ecosystem (think placements in the Meta AI app, Instagram, and WhatsApp).

Meta reports that Advantage+ Shopping drives about 22% higher ROAS than manual setups, one reason Advantage+ automation is becoming the default for beginners and busy creators.
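To make the 22% figure concrete, here is a minimal sketch of the arithmetic. It assumes the usual definition of ROAS (revenue attributed to ads divided by ad spend); the campaign numbers and the helper names `roas` and `apply_lift` are made up for illustration and are not Meta data or a Meta API.

```python
def roas(revenue: float, ad_spend: float) -> float:
    """Return on ad spend: attributed revenue divided by ad spend."""
    if ad_spend <= 0:
        raise ValueError("ad_spend must be positive")
    return revenue / ad_spend


def apply_lift(baseline_roas: float, lift: float) -> float:
    """Scale a baseline ROAS by a relative lift (0.22 means 22% higher)."""
    return baseline_roas * (1.0 + lift)


# Hypothetical manual campaign: $1,000 of spend returning $3,000 in revenue.
spend = 1000.0
manual = roas(revenue=3000.0, ad_spend=spend)

# Apply the roughly 22% lift reported for Advantage+ Shopping.
automated = apply_lift(manual, lift=0.22)

# Extra revenue at the same spend, if the lift held for this campaign.
extra_revenue = (automated - manual) * spend

print(f"manual ROAS: {manual:.2f}")
print(f"automated ROAS: {automated:.2f}")
print(f"extra revenue on ${spend:,.0f} spend: ${extra_revenue:,.2f}")
```

With these made-up numbers, ROAS moves from 3.00 to 3.66, or about $660 more revenue on the same $1,000 of spend. A reported average lift will not necessarily hold for any one account.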

Meta says it aims for fully automated advertising by the end of 2026: you provide a goal, a URL, and a budget, and AI builds the full campaign. Ads can even personalize creative in real time by weather, location, and device, supporting consistent branding while reducing ad-ops work.
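As a rough illustration of how little a goal-only setup asks of you, the three inputs can be pictured as a small record with basic sanity checks. Everything here is hypothetical: `CampaignBrief`, its field names, and the validation rules are assumptions for the sketch, not a Meta API or schema.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class CampaignBrief:
    """Hypothetical container for the three inputs: goal, URL, budget."""

    goal: str            # e.g. "purchases" (illustrative label only)
    landing_url: str     # page the ads should send people to
    daily_budget: float  # in the ad account's currency

    def validate(self) -> None:
        """Raise ValueError if any input is unusable."""
        if not self.goal.strip():
            raise ValueError("goal must not be empty")
        parsed = urlparse(self.landing_url)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            raise ValueError("landing_url must be an absolute http(s) URL")
        if self.daily_budget <= 0:
            raise ValueError("daily_budget must be positive")


# Example brief; the values are placeholders.
brief = CampaignBrief(
    goal="purchases",
    landing_url="https://example.com/product",
    daily_budget=50.0,
)
brief.validate()
```

The point of the sketch is the shape of the input: audiences, creatives, and placements are absent because, in the goal-only model described above, those are the parts the automation is expected to choose.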

Jordan Lee, Head of Ads Product: “Automation shifts the advertiser’s job from micro-managing to setting goals and letting models optimize delivery.”

| Signal/Plan | 2026 Impact |
|---|---|
| Advantage+ Shopping | ~22% higher ROAS vs manual |
| Meta Lattice | Trained on trillions of ad signals |
| Automation roadmap | Goal-only ads by end of 2026 |
| AI creative | Personalizes by location/device |

In 2026, choosing between Meta AI, Google AI, and ChatGPT depends on what you do every day: social posting, search, or deep conversation. These tools overlap, but their ecosystems push you in different directions, especially around platform lock-in and cross-platform APIs.

Simone Alvarez, Tech Analyst: “Each AI ecosystem has a specialty—Meta leads in social integration and ads, Google in search infrastructure, and OpenAI in broad conversational models.”

Check Meta AI news for updates on privacy, watermark policies, and what’s available on Meta AI’s web surfaces.

If you follow Meta AI news, you’ll notice frequent 2025–2026 updates around Meta AI glasses (the CES 2026 Ray-Ban display and teleprompter-style prompts), SAM 3 extensions, and ad automation roadmaps across Instagram and WhatsApp. For official releases, monitor www.meta and ai.meta.com.

In 2026, Meta AI privacy focuses on user control: ephemeral memory (temporary context), clearer consent flows, and optional on-device processing on wearables where available, which is useful when you’re using the Meta AI assistant on Meta glasses.

Priyanka Sharma, Head of Privacy Compliance: “Transparency and provenance are core—users deserve to know what’s AI-generated and how their data is used.”

Meta applies a watermark and provenance tags to AI-generated video and other AI-generated media across tiers, helping you spot AI content and reduce confusion.

SeamlessM4T improvements also aim for consistent speech/text translation, which supports trust in cross-language conversations. Region-specific rules may limit features or delay rollouts in 2026.

In 2026, Meta AI helps you prep a Reel in 10 minutes: auto-captions, image-to-video restyling, and AI-powered writing that turns a rough biography into platform-ready copy. You can also generate a product demo clip with consistent colors, fonts, and tone across campaigns.

Liam Rivers, Creator and Early Adopter: “I cut my editing time in half—AI suggests cuts, captions, and even soundtrack choices tailored to platform specs.”

With Meta AI in WhatsApp and Instagram, you can ask for contextual summaries of long conversation threads, translate DMs, and get smarter feed recommendations through AI-powered personalization. This is where Meta AI feels like a daily utility, not a toy.

Meta AI glasses add real-time captions, landmark IDs, and quick replies while walking. Pair that with the Meta AI assistant for schedule nudges and on-the-go summaries in the Meta AI app (see www.meta for updates).

Next step: try Meta AI chat for free, then test the $10/month premium assistant for creator tools and automation.

In 2026, Meta AI stands out because it’s built into the places you already post, chat, and sell. But the same convenience can raise privacy and portability questions, so you should weigh automation against control.

Elena Voss, Independent Privacy Advocate: “Tools that add convenience must be balanced with clear privacy defaults and easy opt-outs.”

Is Meta AI worth using in 2026? For most people, yes, especially if you create content, run ads, or live inside WhatsApp and Instagram. The decision comes down to workflow fit, privacy comfort, and your business goals and budget.

Victor Nguyen, Small Business Owner: “Switching to AI-driven ads saved me hours of setup each week—ROI improved once I tuned the goal inputs.”

If privacy is your top concern, review your settings, prefer on-device features when possible, and follow Meta AI news for regional changes. Check for watermark indicators on generated media, and export your content to reduce risk.

For businesses, plan for goal-only automation targeting the end of 2026; Advantage+ Shopping can lift ROAS by about 22% when inputs are clean.

In 2026, Meta AI stands out because it turns research into daily tools: breakthroughs like SAM 3 and DINOv3 show up in the Meta AI assistant, chat, and app, in WhatsApp and Instagram, and on Meta AI glasses. That blend of research and consumer features is useful, but worth testing against your own privacy comfort level (think watermark and data controls).

Aisha Bello, Community Manager: “Try it yourself — real-world tests reveal the meaningful time savings and creative boosts Meta AI offers.”

Download the Meta AI app, try the $10/month plan, check Meta AI glasses compatibility, then comment: did Meta AI save you time or raise privacy questions?

Dr. Lina Garcia, Meta Research Lead: “AI should augment curiosity, not replace it.”

Keep that line in your head when you use Meta AI as a creator in 2026. The best Meta AI assistant moments don’t write your whole biography for you. They spark a better conversation, a sharper question, or a faster first draft you still shape.

Picture a normal morning: you step outside, and your Meta AI glasses notice a flooded street. They reroute you, then suggest a quick Instagram Reel idea about urban resilience, with caption options, a shot list, and a reminder to credit sources. That’s Meta AI working like a calm co-pilot, not a boss.

Think of the Meta AI app, WhatsApp, and the glasses as a Swiss Army knife: search, chat, translation, and video tools in one place. The tradeoff is that you must choose what you share, and where.

One caveat from 2026 Meta AI news: watermark and provenance systems help, but they can be gamed. Watch for “clean” re-uploads, cropped frames, and missing context.

Now your turn: imagine your ideal Meta AI wearable feature, then email it to me. Try one tiny experiment on the Free tier today, and tell me what surprised you.

TL;DR: Meta AI in 2026 offers multimodal Llama-based models, two-tier pricing (Free and $10/mo), major projects like SAM 3 and DINOv3, wearables integration, and ad automation that boosts ROAS. It is useful for creators and everyday users.